X-ScaleAI-DPU is a high-performance solution that accelerates CPU-based distributed DNN training by using the capabilities of data processing units (DPUs).

Features

- Exploiting HPC Technologies for CPU-based deep learning.

- Offload DNN training tasks to the DPU

- Support for DNN checkpointing with DPUs (New)

- Support for BlueField-3 DPUs (New)

- User-friendly Python interface to run DL applications on the CPU and DPU

- Fine-tuned MPI library for CPU and DPU systems

- Distributed training with PyTorch using Horovod

- “Out of the box” optimal performance on CPU+DPU platforms

- Tested on several DNNs and datasets with up to 19% improvement in DNN training performance without checkpointing. (New)

- Up to 33% improvement in epoch time with checkpointing (New)

- Simple installation and execution in one command

- Coming soon: support for more system configurations

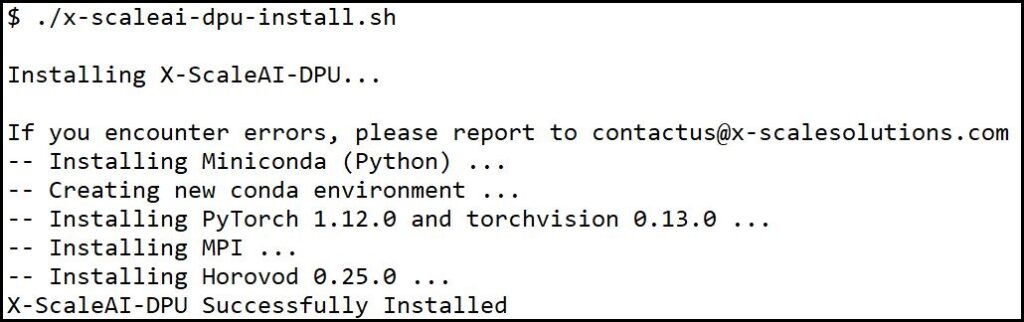

Installation

X-ScaleAI-DPU offers a one-command installation process.

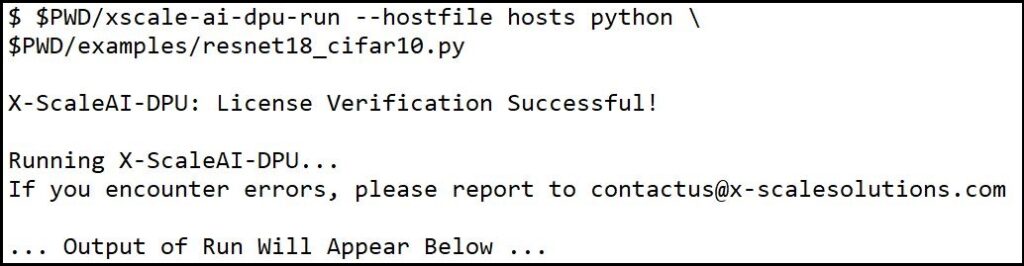

Sample Run

X-ScaleAI-DPU also offers a simple run command.

Performance Evaluation

System Configuration

- Two Intel(R) Xeon(R) E5-2697A V4 16-core CPUs @ 2.60 GHz (32 cores total)

- NVIDIA BlueField-3 SoC, NDR200 200Gb/s InfiniBand adapters

- NVIDIA BlueField-2 SoC, HDR100 100Gb/s InfiniBand adapters

- Memory: 256GB DDR4 2400MHz RDIMMs per node

- 1TB 7.2K RPM SSD 2.5″ hard drive per node

- NVIDIA ConnectX-6 HDR/HDR100 200/100Gb/s InfiniBand/VPI adapters with Socket Direct

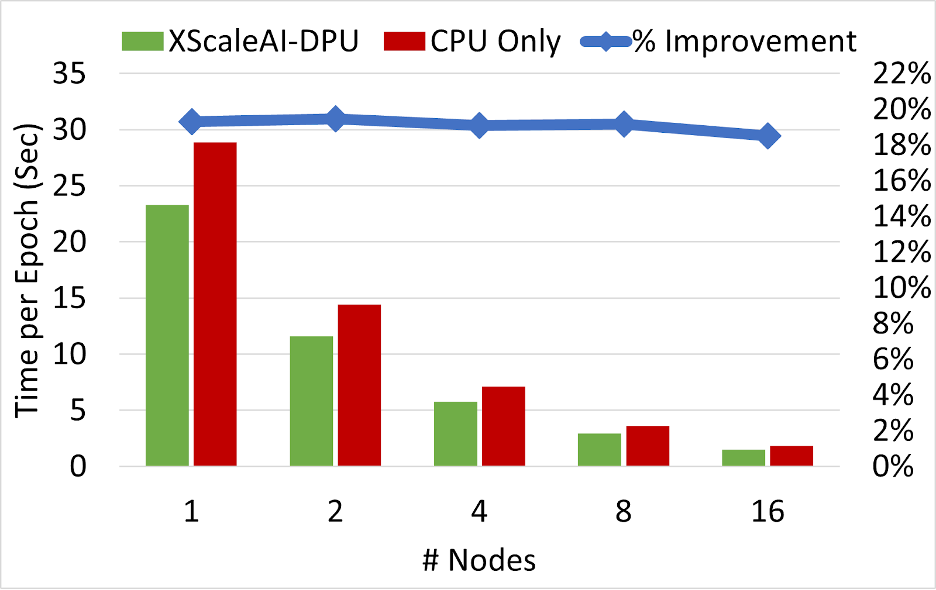

1. X-ScaleAI-DPU improvement for DNN training

- Up to 19% improvement in training performance using X-ScaleAI-DPU

- Support for both BlueField-2 and BlueField-3

- Performance improvement across different DL models and datasets

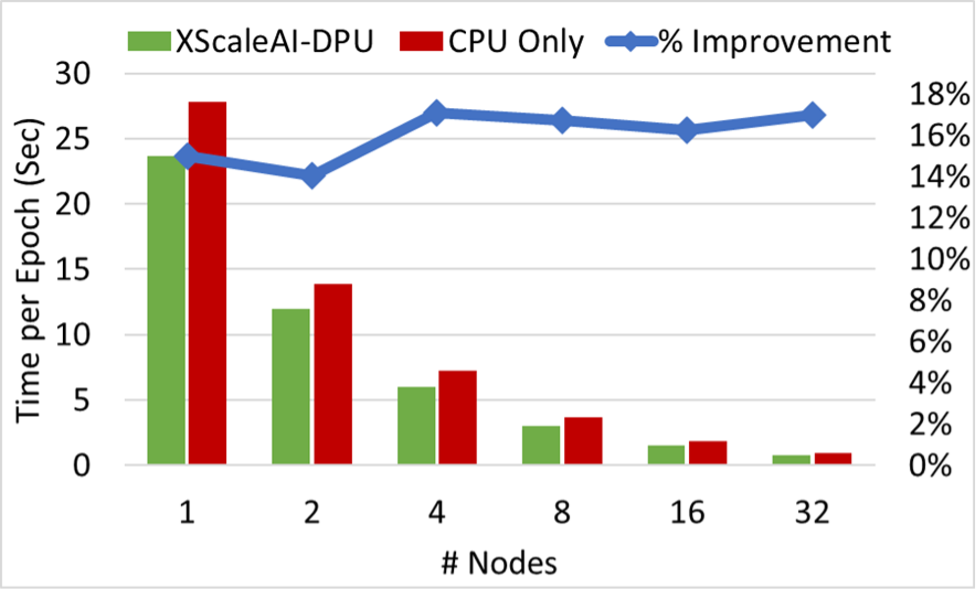

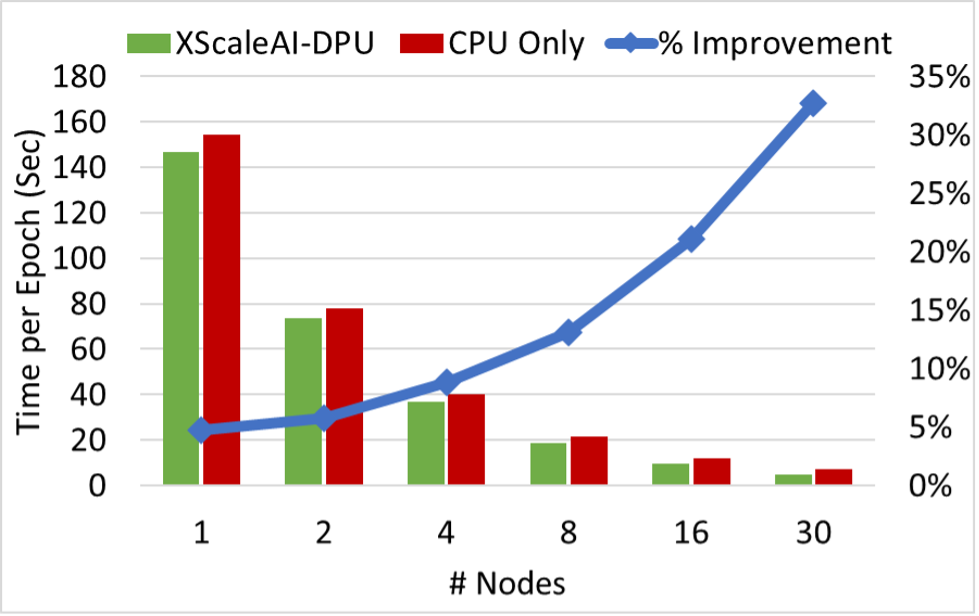

2. X-ScaleAI-DPU improvement for DNN training with checkpointing

- Up to 33% improvement in epoch time on the ResNet-34 model using X-ScaleAI-DPU compared to CPU only.

- The percentage improvement from using X-ScaleAI-DPU for checkpointing grows as the number of nodes increases.

- Performance improvement across different DL models and datasets
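To make the percentages above easier to interpret, here is a minimal sketch of how such a figure could be computed. It assumes "improvement" means the relative reduction in epoch time compared with the CPU-only baseline; the page does not state the exact definition. The function name `percent_improvement` and the timings are hypothetical and are not measured results.

```python
def percent_improvement(baseline_seconds: float, accelerated_seconds: float) -> float:
    """Relative reduction in epoch time, as a percentage of the baseline.

    Assumed definition: 100 * (baseline - accelerated) / baseline.
    """
    if baseline_seconds <= 0:
        raise ValueError("baseline_seconds must be positive")
    return 100.0 * (baseline_seconds - accelerated_seconds) / baseline_seconds


# Hypothetical epoch times for illustration only; not measured results.
cpu_only_epoch = 120.0      # seconds per epoch, CPU only
cpu_plus_dpu_epoch = 90.0   # seconds per epoch, CPU + DPU

print(f"{percent_improvement(cpu_only_epoch, cpu_plus_dpu_epoch):.1f}% improvement")
```

Under this definition, a 33% improvement would mean an epoch that takes 100 seconds on the CPU alone takes about 67 seconds with the DPU.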

Contact

Interested in a free trial? Email us at contactus@x-scalesolutions.com.